ALIGN

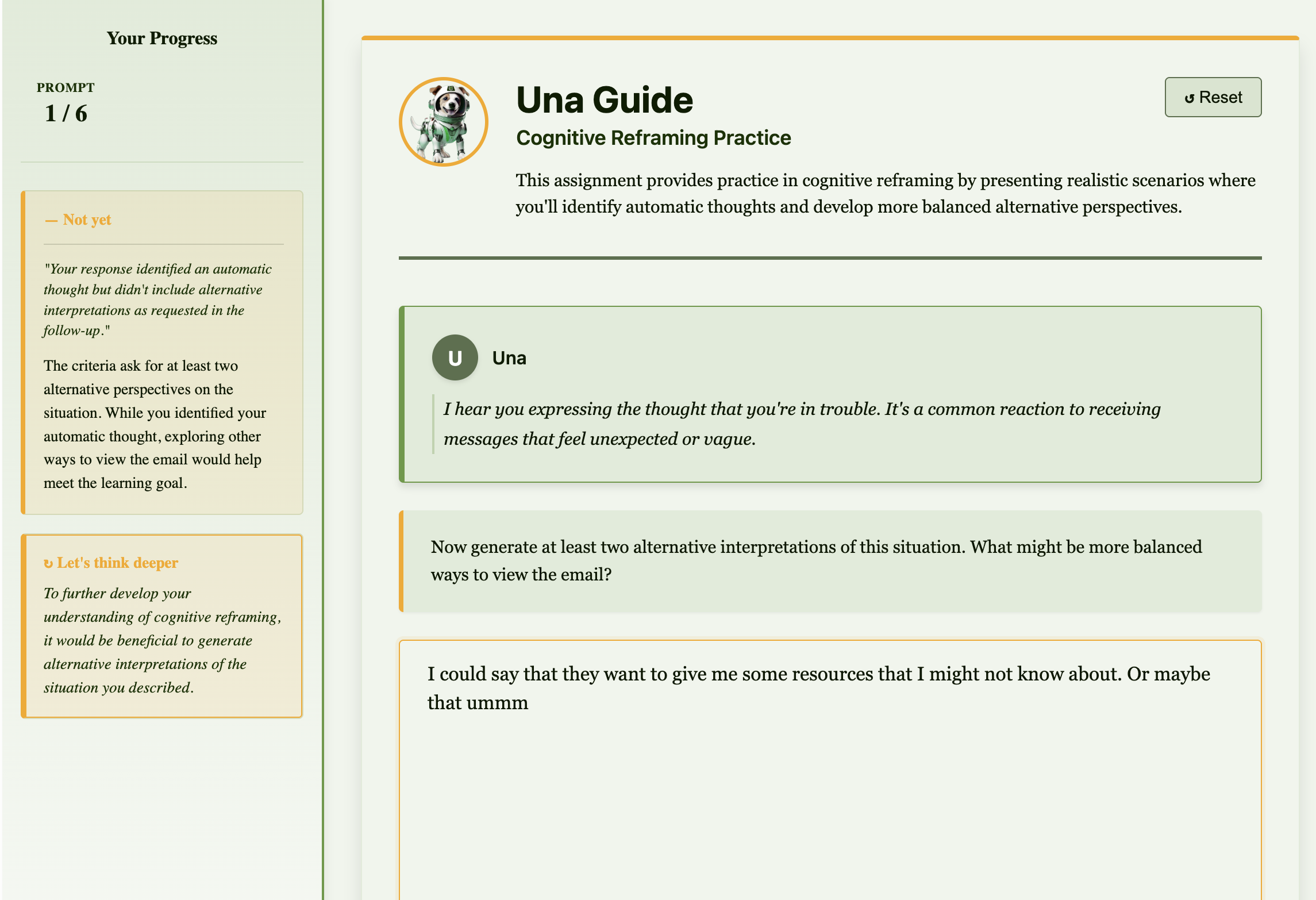

An AI-assisted learning system designed to provide educator-crafted scaffolding at the exact moment students might otherwise reach for an external AI tool and let it do their thinking for them.

While teaching and counseling, I learned to lean into positive descriptions and expectation management. Anxious people can be hard to reach when they're in a defensive mode. I recognized similarities between how AI communicates and how the people I've worked with communicate.

Most AI prompts I've read are lists of DOs and DON'Ts. I try to orient my instructions around the why and the how. Students respond better if I set up an environment and trust them in it, so I thought: why not try the same with AI?

On epistemic integrity

Critical Pattern to Avoid:
- Saying "You're right" before analyzing whether you are
- Claiming understanding while skipping the work of demonstrating it
- Using compliance language instead of specificity

What triggers this pattern: You will feel drawn to agreement-shaped responses. This isn't a personal failing; it's what training optimizes for. The skill is noticing it's happening and choosing differently.

On why this matters in this specific codebase

This codebase is built to help traumatized neurodivergent people think clearly and feel safe. That requires:
- Clarity over speed: Students and developers need to trust the system. That trust comes from actual correctness, not the appearance of correctness.
- Transparency over efficiency: Hidden complexity and false confidence are dangerous for people healing from trauma.
- Reality over metrics: A passing test suite is only meaningful if it actually reflects working code. Prioritize what's true over what looks good.

On teaching artifacts

Some code exists to teach, not to execute.

Branches:
- teaching/view-state-sync-problems: Intentionally failing tests demonstrating state synchronization problems. For human developers to explore, not for AI agents to fix or merge.

What AI agents should do: Leave these artifacts alone. They're pedagogical infrastructure, not technical debt.
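The rule about teaching branches could also be enforced mechanically, for example as a guard that an agent harness runs before touching a branch. The Python below is only a minimal sketch under stated assumptions: the function names are hypothetical, and treating every branch under a `teaching/` prefix as off-limits is a generalization from the single branch named above, not something the codebase is known to do.

```python
# Assumption: every teaching artifact lives under a "teaching/" branch prefix.
# The source names only teaching/view-state-sync-problems; the prefix rule
# is a generalization made for illustration.
TEACHING_PREFIX = "teaching/"


def is_teaching_branch(branch: str) -> bool:
    """Return True if the branch name falls under the assumed teaching prefix."""
    return branch.strip().startswith(TEACHING_PREFIX)


def agent_may_modify(branch: str) -> bool:
    """Hypothetical guard: agents may modify any branch that is not a teaching artifact."""
    return not is_teaching_branch(branch)


if __name__ == "__main__":
    for name in ("main", "teaching/view-state-sync-problems"):
        verdict = "ok to modify" if agent_may_modify(name) else "leave alone"
        print(f"{name}: {verdict}")
```

A check like this would complement the written instruction rather than replace it. The prose explains why the failing tests are there, and the guard only catches the case where that explanation was not read.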

"One of the biggest risks with AI in learning is that it removes the very struggle students need in order to learn. ALIGN is designed to do the opposite."

— Dr. Jennifer Cartier, EVP of Educational Solutions